Cybersecurity Defense Strategies

How to Prevent Cyber Attacks?

Once you establish an active Web presence, you put a target on your company's back. Like the unfortunate insect caught in a spider's web, the size of your company determines the extent of disruption you create on the Web and how quickly you get noticed by the bad guys. How attractive you are as prey is often directly proportional to what you can offer a predator. If your business is an e-commerce site that thrives on credit cards or other financial information, or a company with valuable secrets to steal, your attractiveness quotient increases; you have more value to steal. And if your business is new and your presence on the Web is new, it can be assumed that you are not yet versed in the intricacies of cyber warfare and are therefore more vulnerable to an attack.

Unfortunately, most of those who want to infiltrate your network defenses are trained, motivated, and highly intelligent at developing faster and more effective methods to sneak around your environment by checking for the smallest vulnerabilities. Most IT professionals know that a business's firewall is constantly probed for weaknesses and vulnerabilities by attackers from all corners of the world. Anyone who follows software-related news understands that seemingly every few months, news breaks about a new, exploitable vulnerability in an operating system or application. It is widely known that even the most knowledgeable network administrator or programmer writing the software cannot find and close all the vulnerabilities in today's increasingly complex software.

Errors exist in applications, operating systems, server processes (daemons), and clients. System configurations can also be exploited, such as not changing the default administrator password or accepting default system settings, or unintentionally leaving a gaping hole by configuring the machine to run in an unsecured mode. Even the Transmission Control Protocol/Internet Protocol (TCP/IP), the foundation on which all Internet traffic runs, can be exploited because the protocol was designed before the threat of hacking was truly widespread. Therefore, it contains design flaws that could, for example, allow a hacker to easily change IP data.

When word gets out (and it will) that there is a new exploitable vulnerability in an application, crackers around the world start scanning sites on the Internet, looking for any that have the vulnerability in question.

The fact that many vulnerabilities in your network can be caused by your employees makes your job even more difficult. Casual browsing of porn sites can expose the network to all kinds of nasty bugs and malicious code just by an employee visiting the site. The problem is, for users this may not seem like that big of a deal. They either don't realize or don't care that they're leaving the network open to intrusions.

1. So, what is Intrusion?

A network intrusion is an unauthorized entry into a computer in your organization or an address within your assigned domain. An intrusion can be passive (in which infiltration is stealthy and goes undetected) or active (in which changes to network resources are effected). Intrusions can come from outside or inside your network structure (an employee, customer, or business partner). Some intrusions are meant only to let you know that the intruder was there, defacing your website with various messages or vulgar images. Others are more malicious, attempting to obtain critical information on a one-off basis or as an ongoing parasitic affair that will continue to siphon data until discovered. Some intruders attempt to inject elaborate code to crack passwords, record keystrokes, or impersonate your site to redirect unsuspecting users to their site. Others embed themselves in the network and silently extract data on an ongoing basis or replace public Web pages with various messages.

An attacker can enter your system physically (by gaining physical access to a restricted machine and its hard drive and/or BIOS), externally (by attacking your Web servers or finding a way to bypass your firewall), or internally (through your own users, customers, or partners).

2. Sobering Figures

So how often do these intrusions occur? The estimates are staggering: depending on which reporting organization you listen to, there were between 79 million and 160 million electronic data breaches worldwide between 2007 and 2008. U.S. government statistics show that there were an estimated 37,000 known and reported incidents against federal systems in 2007 alone, and this number is expected to increase as the tools used by crackers become increasingly sophisticated.

In one case, the credit and debit card information of more than 45 million users was stolen from a large merchant in 2005, and the data of a further 130,000 people was stolen in 2006. Sellers reported that this loss would cost them an estimated $5 million.

Spam remains one of the biggest problems facing businesses today and is increasing steadily every year. "Compromise of innocent machines via e-mail and Web-based infections continued in Q3, with more than 5,000 new zombies being created every hour," reads an Internet threat report published by Secure Computing Corporation in October 2008. In the 2008 election year, election-related spam was estimated to exceed 100 million messages per day.

Malware use is also steadily increasing, with "nearly 60% of all malware-infected URLs" coming from the US and China, according to research by Secure Computing. Web-related attacks will become more common, with political and financial attacks being at the top of the list. Secure Computing's research estimates that with the increasing availability of Web attack toolkits, "approximately half of all Web-borne attacks are likely to be hosted on compromised legitimate Web sites."

Whatever the purpose of the intrusion (entertainment, greed, bragging rights, or data theft), the result is the same: a weakness in your network security has been identified and exploited. And unless you discover this weakness, the point of intrusion, it will remain an open door into your environment. So, who is out there trying to get into your network?

3. Know Your Enemy: Hackers vs. Crackers

A community of people who are experts in programming and computer networks and who excel at solving complex problems has existed since the early days of computing. The origin of the term hacker goes back to the members of this culture, and they are quick to point out that it is hackers who set up and run the Internet and created the Unix operating system. Hackers see themselves as members of a community that builds things and makes them work. And the term hacker is a badge of honor for those in their culture.

If you ask a traditional hacker about people who sneak into computer systems to steal data or cause damage, they will most likely correct you by saying that these people are not real hackers. (The term used for these types in the hacker community is cracker, and these two labels are not synonymous). Therefore, to avoid offending traditional hackers, I will use the term cracker and focus on them and their efforts.

From the lone wolf cracker looking to gain the admiration of his peers, to the disgruntled ex-employee seeking revenge, or the deep pockets and seemingly limitless resources of a hostile government determined to take down wealthy capitalists, crackers are out to find the chink in your system's defensive armor.

A cracker's specialty (or, in some cases, his mission in life) is to seek out and exploit the vulnerabilities of an individual computer or network for his own purposes. Crackers' intentions are normally malicious and/or criminal. They have a vast library of information at their disposal to help them hone their tactics and skills, and they can draw on the almost limitless experience of other crackers through a community of like-minded individuals who share knowledge through underground networks.

They often begin this life by learning the most basic of skills: software programming. The ability to write code that can make a computer do what you want is seductive in itself. As they learn more about programming, they expand their knowledge of operating systems and, as a natural progression, of those systems' weaknesses. They also quickly learn that, to expand the scope and type of their illegal business, they need to learn HTML, the code that allows them to create fake Web pages that lure unsuspecting users into revealing sensitive financial or personal data.

These new crackers have extensive underground organizations they can turn to for information. They hold meetings, write articles, and develop tools that they pass on to each other. Each new acquaintance they make strengthens their skills and trains them to move into increasingly sophisticated techniques. Once they reach a certain level of proficiency, they start their trade in earnest.

They start by researching potential target companies on the Internet (an invaluable source of all kinds of information about the corporate network). Once a target is identified, they can quietly sneak around, searching for old forgotten backdoors and operating system vulnerabilities. They can start simply and harmlessly by running basic DNS queries that can provide IP addresses (or IP address ranges) as a starting point to launch an attack. They can sit back and listen for incoming and/or outgoing traffic, log IP addresses, and test for vulnerabilities by pinging various devices or users.

They may secretly plant password cracking or logging applications, keystroke loggers, or other malware designed to keep their unauthorized connections alive and profitable.

The cracker wants to act like a cyber-ninja, sneaking up and infiltrating your network without leaving a trace. Some more experienced crackers may put multiple layers of machines, most of them compromised, between themselves and your network to hide their activities. Like standing in a room full of mirrors, the attack seems to come from so many places that you can't tell what is real from what is a ghost. And before you know what they're doing, they're gone like smoke in the wind.

4. Motives

While the goal is the same (to infiltrate your network defenses), crackers' motivations often differ. In some cases, intrusion into a network can be done from the inside by a disgruntled employee who wants to harm the organization or steal company secrets for profit.

There are large cracker groups that work diligently to steal credit card information and then offer it for sale. They want to grab and run quickly: take what they want and leave. Their cousins are network parasites, those who silently breach your network and then sit there siphoning off data.

A new and very disturbing trend is the discovery that some governments are funding digital attacks on the network resources of both federal and corporate systems. Various agencies, from the US Department of Defense to the governments of New Zealand, France and Germany, have reported attacks from unidentified Chinese hacking groups. It should be noted that the Chinese government denies any involvement and there is no evidence of its involvement. Additionally, in October 2008, the South Korean Prime Minister reportedly issued a warning to his cabinet that "approximately 130,000 pieces of government information have been hacked [by North Korean hackers] in the past four years."

5. Tools

Today, crackers are armed with an increasingly sophisticated and well-stocked set of tools to do what they do. Just like professional thieves with their custom-made picks, crackers today can acquire an intimidating array of tools to secretly test vulnerabilities in your network. These tools range from simple password-stealing malware and keystroke loggers to methods of injecting sophisticated parasite strings that copy data streams from customers seeking to conduct e-commerce transactions with your company. Some of the more commonly used tools include:

- Wireless sniffers. These devices not only detect the location of wireless signals within a certain range but can also sniff out the data transmitted over those signals. With the increasing popularity and use of remote wireless devices, this practice is increasingly responsible for the loss of critical data and poses a significant headache for IT departments.

- Packet sniffers. Once inserted into a network data stream, these tools passively analyze data packets entering and exiting a network interface, and utilities capture the data packets passing through that interface.

- Port scanners. A good analogy for these tools is a thief watching a neighborhood, looking for an unlocked door. These tools send consecutive, sequential connection requests to the target system's ports to see which ones respond to the request or are open. Some port scanners allow an attacker to slow down the port scanning rate (sending connection requests over a longer period of time) so that the intrusion attempt is less likely to be detected. The usual targets for these tools are old, forgotten "back doors" or ports that were accidentally left unprotected after network changes.

- Port knocking. Sometimes network administrators create a secret backdoor method to get through firewall-protected ports: a secret knock that gives them quick access to the network. Port knocking tools find these unprotected entries and insert a Trojan horse that listens to network traffic for evidence of that secret knock.

- Keystroke loggers. These are spyware programs that are placed on vulnerable systems and record the user's keystrokes. Frankly, if someone can sit back and record every keystroke a user makes, it wouldn't take long to obtain things like usernames, passwords, and ID numbers.

- Remote administration tools. These are programs that are placed on an unsuspecting user's system and allow the cracker to take control of that system.

- Network scanners. These investigate networks to see the number and type of host systems on a network, the services available, the host's operating system, and the type of packet filtering or firewalls used.

- Password crackers. These sniff networks for data streams associated with passwords and then use brute force to peel away the layers of encryption that protect those passwords.
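Seen from the defender's side, the port scanner behavior described above (connection requests sent to many different ports, possibly spread out over time) can be spotted in connection logs. The following is only a minimal sketch: the `(timestamp, source, port)` record format, the function name `find_port_scanners`, and both thresholds are assumptions made for illustration, not part of any particular firewall or IDS product.

```python
from collections import defaultdict


def find_port_scanners(events, window_seconds=3600, min_distinct_ports=20):
    """Return source addresses that touched many distinct ports within one window.

    events: iterable of (timestamp_seconds, source_ip, destination_port) tuples,
    a hypothetical format standing in for whatever your firewall logs provide.
    """
    by_source = defaultdict(list)
    for timestamp, source, port in events:
        by_source[source].append((timestamp, port))

    suspects = set()
    for source, hits in by_source.items():
        hits.sort()
        start = 0
        ports_in_window = defaultdict(int)  # port -> hits inside the window
        for timestamp, port in hits:
            ports_in_window[port] += 1
            # Drop hits that have fallen out of the sliding time window.
            while timestamp - hits[start][0] > window_seconds:
                old_port = hits[start][1]
                ports_in_window[old_port] -= 1
                if ports_in_window[old_port] == 0:
                    del ports_in_window[old_port]
                start += 1
            if len(ports_in_window) >= min_distinct_ports:
                suspects.add(source)
                break
    return suspects


# One new port per minute from a single source, plus a busy single-port client.
events = [(i * 60, "203.0.113.9", 1000 + i) for i in range(25)]
events += [(i, "198.51.100.7", 443) for i in range(100)]
print(find_port_scanners(events))
```

A longer window makes deliberately slowed-down scans easier to catch, at the cost of more false positives from legitimate clients, so both numbers would need tuning against real traffic.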

6. Bots

A new and particularly virulent threat that has emerged in the last few years is one in which a virus is secretly implanted into a large number of unprotected computers (usually those located in homes), hijacking them (without their owners' knowledge) and turning them into slaves to do the hacker's bidding. These compromised computers, known as bots, connect to large and often untraceable networks called botnets. Botnets are designed to work so that instructions come from a central computer and are quickly shared among other botted computers on the network. Newer botnets now use a "peer-to-peer" method, making detection difficult, if not impossible, by law enforcement because they lack a central identifiable control point. And because they often cross international borders into countries that lack the means (or will) to investigate and shut them down, they can grow at an alarming rate. They can be so lucrative that they have now become a tool of choice for hackers.

Botnets exist largely due to the number of users who do not comply with the basic principles of computer security (installed and/or up-to-date antivirus software, regular scans for suspicious codes, etc.) and thus become unwitting accomplices. Once compromised and "botted," their machines are turned into conduits through which large volumes of unwanted spam or malicious code can be rapidly distributed. Current estimates suggest that 40% of the 800 million computers on the Internet are being used by bots controlled by cyberthieves to spread new viruses, send unsolicited spam e-mail, crash Web sites with denial-of-service (DoS) attacks, or siphon sensitive user data from banking or shopping Web sites that look and behave like legitimate sites where customers have previously done business.

It's such a widespread problem that, according to a report published by security firm Damballa, botnet attacks rose from an estimated 300,000 per day in August 2006 to over 7 million per day a year later, with more than 90% of what was sent being spam email. Even worse for e-commerce sites, there is a growing trend in which a site's operators are threatened with DoS attacks unless they pay protection money to a cyber extortionist. Those who refuse to negotiate with these terrorists see their sites fall prey to relentless rounds of cyber "carpet bombing."

Bot controllers can also make money by renting their networks to others who need a large and untraceable means of sending out large amounts of advertising but who lack the financial or technical resources to build their own network. To make matters worse, botnet technology can be found online for less than $100, making it relatively easy to start what could be a very lucrative business.

7. Signs of Intrusions

As mentioned before, your company's mere presence on the Web puts a target on your back. It's only a matter of time before you encounter the first attack. This could be something as seemingly innocent as a few failed login attempts, or it could be something as obvious as an attacker defacing your Web site or bringing down your network. It's important to go into this job knowing you're vulnerable.

Crackers will first look for known weaknesses in your operating system (OS) or the applications you use. They then start looking for holes, open ports, or forgotten backdoors: flaws in your security posture that can be quickly or easily exploited.

Probably one of the most common signs of an intrusion, whether attempted or successful, is repeated evidence that someone is trying to exploit your organization's own security systems; the tools you use to monitor suspicious network activity can actually be used against you quite effectively. Tools such as network security and file integrity scanners can be invaluable in helping you make ongoing assessments of your network's vulnerability, but they can also be used by crackers looking for a way in.
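To make the idea of a file integrity scanner concrete, here is a minimal sketch using only the Python standard library: it records a SHA-256 hash for each watched file and later reports which files changed, disappeared, or appeared. The function names and the demo file `hosts.conf` are hypothetical; real scanners also protect the baseline itself and track permissions and ownership, which this sketch does not attempt.

```python
import hashlib
import tempfile
from pathlib import Path


def hash_file(path):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_baseline(paths):
    """Map each path to its current hash; store this somewhere tamper-resistant."""
    return {str(p): hash_file(p) for p in paths}


def compare_to_baseline(baseline, paths):
    """Report files whose contents differ from, or are absent in, the baseline."""
    current = {str(p): hash_file(p) for p in paths if Path(p).exists()}
    return {
        "changed": sorted(p for p in current if p in baseline and current[p] != baseline[p]),
        "missing": sorted(p for p in baseline if p not in current),
        "added": sorted(p for p in current if p not in baseline),
    }


# Demo on a throwaway file: take a baseline, tamper with the file, compare.
with tempfile.TemporaryDirectory() as folder:
    target = Path(folder) / "hosts.conf"
    target.write_text("original contents")
    baseline = build_baseline([target])
    target.write_text("tampered contents")
    report = compare_to_baseline(baseline, [target])
    changed_names = [Path(p).name for p in report["changed"]]
print(changed_names)
```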

A large number of failed login attempts is also a good indication that your system has been targeted. The best penetration testing tools can be configured with attempt thresholds that, when exceeded, will trigger an alert. They can passively distinguish between legitimate and suspicious activity of a recurring nature, monitor the time intervals between activities (alerting when the number exceeds a threshold you specify), and build a database of signatures seen multiple times over a given period.

The "human element" (your users) is a constant factor in your network operations. Users often mistype a response, but they usually correct the mistake on the next try. However, a series of mistyped commands or incorrect login responses (along with attempts to recover or reuse them) can be a sign of brute force attack attempts.
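The difference between an honest typo and a brute force attempt comes down to how many failures arrive within how short a time. A minimal sketch of such an attempt threshold follows; the class name `FailedLoginMonitor`, its default limits, and the choice to reset the count after a successful login are all assumptions for illustration rather than the behavior of any specific tool.

```python
from collections import defaultdict, deque


class FailedLoginMonitor:
    """Flag accounts that exceed a failure threshold inside a sliding time window."""

    def __init__(self, max_failures=5, window_seconds=300):
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        self._failures = defaultdict(deque)  # account -> recent failure timestamps

    def record_failure(self, account, timestamp):
        """Record one failed login; return True when the threshold is exceeded."""
        recent = self._failures[account]
        recent.append(timestamp)
        # Forget failures that are older than the window.
        while recent and timestamp - recent[0] > self.window_seconds:
            recent.popleft()
        return len(recent) > self.max_failures

    def record_success(self, account):
        """A successful login clears the account's failure history."""
        self._failures.pop(account, None)


monitor = FailedLoginMonitor(max_failures=3, window_seconds=60)
alerts = [monitor.record_failure("alice", t) for t in (0, 10, 20, 30, 40)]
print(alerts)  # [False, False, False, True, True]
```

Resetting on success keeps ordinary typos from accumulating, but it also means a brute force run that finally guesses correctly wipes its own history, so a production monitor would probably log the alert before clearing anything.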

Packet inconsistencies in direction (incoming or outgoing), source address or location, and session characteristics (incoming sessions versus outgoing sessions) can also be good indicators of an attack. If a packet has an unusual source or is directed to an abnormal port (for example, an inconsistent service request), this may be a sign of a random system scan. Packets that arrive from outside carrying local network addresses and requesting services inside may be a sign of IP spoofing.
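The IP spoofing check described above reduces to one question: did a packet that claims an internal source address arrive on the outside-facing interface? A minimal sketch with Python's standard `ipaddress` module is shown below. The internal ranges listed and the `looks_spoofed` helper are assumptions for illustration; substitute the ranges your own network actually uses.

```python
import ipaddress

# Assumed internal address ranges; replace with your own network's ranges.
INTERNAL_NETWORKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def looks_spoofed(source_ip, arrived_on_external_interface,
                  internal_networks=INTERNAL_NETWORKS):
    """True when a packet from outside claims a source address inside the network."""
    address = ipaddress.ip_address(source_ip)
    claims_internal = any(address in network for network in internal_networks)
    return arrived_on_external_interface and claims_internal


print(looks_spoofed("10.1.2.3", arrived_on_external_interface=True))     # True
print(looks_spoofed("10.1.2.3", arrived_on_external_interface=False))    # False
print(looks_spoofed("203.0.113.5", arrived_on_external_interface=True))  # False
```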

Sometimes strange or unexpected system behavior is a sign in itself. Although this is sometimes difficult to keep track of, you should be aware of events such as changes in system clocks, servers shutting down or server processes inexplicably stopping (followed by attempts to restart the system), system resource issues (such as unusually high CPU activity or overflows in file systems), audit logs behaving in strange ways (shrinking in size without administrative intervention), or unexpected user access to resources. If you notice unusual activity at regular times on certain days, heavy system usage (a possible DoS attack) or heavy CPU usage (brute force password cracking attempts) should always be investigated.
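One of these signs, an audit log that shrinks without administrative intervention, is easy to check for mechanically. The sketch below compares two size samples; the dictionaries mapping log name to size in bytes are a hypothetical format (in practice they might be collected periodically with `os.path.getsize`), and scheduled log rotation would have to be excluded before treating a hit as suspicious.

```python
def find_shrinking_logs(previous_sizes, current_sizes):
    """Return log names whose size dropped, or that vanished, between two samples."""
    suspicious = []
    for name, old_size in previous_sizes.items():
        new_size = current_sizes.get(name)
        if new_size is None or new_size < old_size:
            suspicious.append(name)
    return sorted(suspicious)


earlier = {"auth.log": 5000, "audit.log": 9000}
later = {"auth.log": 5200, "audit.log": 1200}
print(find_shrinking_logs(earlier, later))  # ['audit.log']
```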

8. What Can You Do?

It goes without saying that the most secure network, that is, the network that is least likely to be compromised, is the one that does not have a direct connection to the outside world. But this isn't a very practical solution, because the only reason you have a Web presence is to do business. And in the Internet trading game, your biggest concern is not the sheep moving in, but the wolves dressed as sheep moving in with them. So how do you strike an acceptable balance between keeping your network free of intrusions and accessible at the same time?

As your company's network administrator, you walk a fine line between network security and user needs. You must have a good defense that still allows access. Users and customers can be both the lifeblood of your business and the largest potential source of infection. Additionally, if your business thrives by allowing users access, you have no choice but to allow them in. This seems like an enormously difficult task at best.

Like an imposing but immovable castle, any defensive measures you set up will eventually be compromised by legions of highly motivated thieves looking to break in. It's a move/countermove game: You adjust, they adapt. That's why you should start with defenses that can adapt and change quickly and effectively as external threats adapt.

First of all, you need to make sure your perimeter defenses are as strong as possible, and that means keeping up with the rapidly evolving threats around you. Gone are the days of relying on a firewall that only performs firewall functions; today's crackers have figured out how to bypass the firewall by exploiting weaknesses in applications. Being merely reactive to attacks and intrusions is not a great option either; it's like waiting for someone to hit you before deciding what to do instead of seeing the approaching punch and moving out of the way or blocking it. You need to be flexible in your approach to the latest technologies, constantly monitoring your defenses to ensure your network's defensive armor can meet the latest threat. To ensure that no one sneaks something past you unnoticed, you must have a very dynamic and effective policy of constantly monitoring for suspicious activity that can be dealt with quickly when discovered. Once something has slipped past, it is too late.

This is also a very important component for network administrators: you have to educate your users. No matter how good a job you've done at tightening your network security processes and systems, you still have to deal with the weakest link in your armor: your users. It's no use having bulletproof processes if these processes are so difficult to manage that users skirt around them to avoid the hassle, or if these processes are so loosely structured that a user visiting an infected site could infect your network with a virus. As the number of users increases, the difficulty of securing your network increases significantly.

User training becomes especially important when it comes to mobile computing. Losing a device, using it in a place (or way) where prying eyes might see passwords or data, being aware of hacking tools specifically designed to sniff wireless signals for data, and logging into unsecured networks are all potential problem areas that users should be familiar with.

Know Today's Networking Needs

The traditional approach to network security engineering has been to try to build preventive measures (firewalls) to protect the infrastructure from intrusions. The firewall acts like a filter, catching anything that looks suspicious and keeping everything behind it as sterile as possible. However, while firewalls are good, they often don't do much in the way of identifying compromised applications that are using network resources. And with the pace of evolution seen in the field of penetration tools, an approach designed solely to prevent attacks will become less and less effective.

Today's IT environment is no longer limited to the office as it used to be. While there are still fixed systems inside the firewall, ever more sophisticated remote and mobile devices are entering the workforce. This influx of mobile computing has expanded the traditional boundaries of the network to increasingly remote locations and required a different way of thinking about network security requirements.

The edge or perimeter of your network is changing and expanding beyond its historical boundaries. Until recently, this endpoint was the user, a desktop system or laptop, and these devices were relatively easy to secure. To use a metaphor: the difference between the edge in early network design and today's edge is like the difference between the battles of World War II and the current war on terror. Battles in World War II had very clearly defined "front lines": one side was controlled by the Allied powers, the other by the Axis. However, today the war on terrorism has no such front line and is fought in multiple areas with different techniques and strategies customized for each battlefield.

With today's explosion of remote users and mobile computing, the edge of your network is no longer as clearly defined as it once was and is evolving very quickly. So, while having a solid perimeter security system is still a critical part of your overall security policy, your network's physical perimeter can no longer be viewed as your best "last line of defence."

Any policy you develop should be tailored to leverage the power of your unified threat management (UTM) system. For example, firewalls, antivirus, and intrusion detection systems (IDSs) work by trying to block all currently known threats, a "blacklist" approach. But threats evolve faster than UTM systems can, so it's almost always a game of catch-up "after the fact." Perhaps a better and more easily managed policy is to specifically state which devices are allowed access and which applications are allowed to run on your network. This "whitelist" approach helps reduce the amount of time and energy required to keep up with the rapidly evolving pace of threat sophistication because you determine what goes in and what you need to keep out.
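The practical difference between the two approaches shows up when something previously unseen appears. In the minimal sketch below, the application names and both sets are made up for illustration: a blacklist lets an unknown application through because nobody has listed it yet, while a whitelist rejects it by default.

```python
# Hypothetical lists; neither reflects a real product or threat feed.
KNOWN_BAD = {"keylogger.exe", "botclient.exe"}            # blacklist
APPROVED = {"mailclient.exe", "browser.exe", "erp.exe"}   # whitelist


def blacklist_allows(application):
    """Allow everything except what is already known to be bad."""
    return application not in KNOWN_BAD


def whitelist_allows(application):
    """Allow only what has been explicitly approved."""
    return application in APPROVED


for app in ("browser.exe", "keylogger.exe", "newthreat.exe"):
    print(app, blacklist_allows(app), whitelist_allows(app))
```

Only `newthreat.exe` separates the two policies: the blacklist allows it and the whitelist does not, which is the catch-up problem the paragraph above describes.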

Any UTM system you use should enable you to do two things: determine which applications and devices are allowed, and provide a policy-based approach to managing those applications and devices. It should allow you to protect your critical resources against unauthorized data extraction (or data leakage), guard against the most persistent threats (viruses, malware, and spyware), and evolve with the ever-changing range of devices and applications designed to penetrate your external defenses.

So what is the best strategy for integrating these new remote endpoints? First, you need to realize that these new remote, mobile technologies are becoming increasingly common and are not going away anytime soon. In fact, they likely represent the future of computing. As these devices gain complexity and functionality, they are pulling end users away from their desks and becoming indispensable tools for some businesses. iPhones, Blackberries, Palm Treos, and other smartphones and devices now have the capability to interface with corporate email systems, access networks, run enterprise-level applications, and perform full-featured remote computing. As such, they also pose an increased risk to network administrators due to loss or theft (especially if the device is not protected by a robust authentication method) and unauthorized interception of wireless signals through which data can be siphoned.

To deal with the inherent risks, you need to implement an effective security policy to deal with these devices: under what conditions can they be used, how many of your users will need to use them, what levels and types of access will they have, and how will they be authenticated?

Solutions exist to add strong authentication for users seeking access over wireless LANs. Tokens, which can be hardware or software, identify the user to an authentication server that verifies the user's credentials. For example, PremierAccess by Aladdin Knowledge Systems can process access requests from a wireless access point and pass them to the network if the user is authenticated.
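One common way a token can prove a user's identity to an authentication server is a counter-based one-time password, standardized as HOTP in RFC 4226. The sketch below is an illustration of that general technique only; it is not a description of how PremierAccess or any other named product works, and the `verify` helper with its look-ahead window is a simplified assumption.

```python
import hashlib
import hmac
import struct


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """Compute an RFC 4226 one-time password from a shared secret and a counter."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    truncated = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(truncated % 10 ** digits).zfill(digits)


def verify(secret, submitted_code, server_counter, look_ahead=3):
    """Return the next server counter on success, or None if nothing matches.

    The look-ahead window tolerates a token whose counter has moved ahead of
    the server's, for example after accidental button presses.
    """
    for counter in range(server_counter, server_counter + look_ahead + 1):
        if hmac.compare_digest(hotp(secret, counter), submitted_code):
            return counter + 1
    return None


secret = b"12345678901234567890"  # test secret from RFC 4226, Appendix D
print(hotp(secret, 0))               # 755224
print(verify(secret, "287082", 0))   # 2
```

Because each code is valid only once and depends on a secret that never crosses the wireless link, a sniffed code is of little use to an eavesdropper after it has been accepted.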

One of the first steps you can take to ensure the security of your network while allowing mobile computing is to fully educate users of this technology. Users need to have a firm understanding of the risks their mobile device poses to your network (and ultimately the company at large) and that it is an absolute necessity to pay attention to both the physical and electronic security of the device.

Best Practice for Network Security

So how do you "clean and tighten" your existing network or design a new network that can withstand the inevitable attacks? Let's look at some basics.

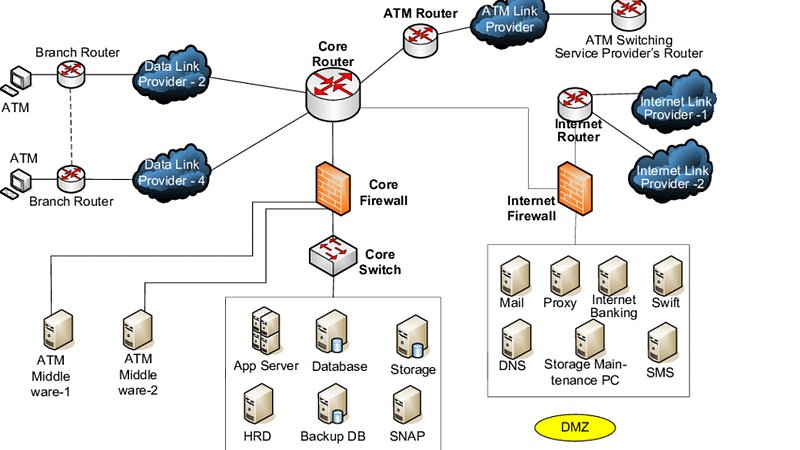

network diagram

The figure shows what a typical network layout might look like. Users outside the DMZ approach the network via a secure (HTTPS) Web or VPN connection. They are authenticated by the perimeter firewall and directed to a Web server or VPN gateway. If they are allowed through, they can access resources within the network.

If you're an administrator of an organization with only a few dozen users, your task (and the illustrated layout) will be relatively easy to manage.

But if you need to manage several hundred (or several thousand) users, the complexity of your task increases by orders of magnitude. This makes a good security policy an absolute necessity.

9. Security Policies

Like the tedious prep work before painting a room, organizations need a good, detailed, and well-written security policy. It's not something to be rushed through "just to get it done." Your security policy must be well thought out; in other words, "the devil is in the details." Your security policy is designed to get everyone involved in your network "thinking along the same lines."

The policy is almost always a work in progress. It must evolve with technology, especially technologies that aim to sneak into your system. Threats will continue to evolve, as will the systems designed to keep them out.

A good security policy is not always a single document; rather, it is a collection of policies that address specific areas such as computer and network use, forms of authentication, email policies, remote/mobile technology use, and Web browsing policies. While it should be comprehensive, it should be written in a way that is easily understood by those it affects. Accordingly, your policy does not need to be overly complex. If you give new employees something the size of War and Peace and tell them they are responsible for knowing its content, you can expect to have ongoing problems maintaining good network security awareness. Keep it simple.

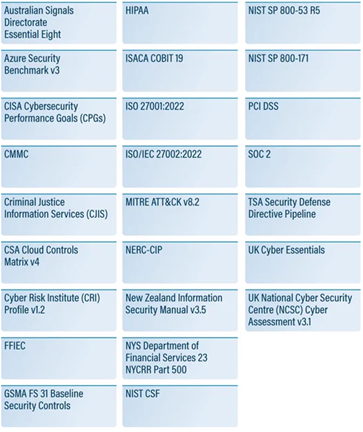

Security Standards

First, you need to prepare some policies that describe your network and its underlying architecture. A good place to start is by asking the following questions:

- What types of resources need to be protected (user financial or medical data, credit card information, etc.)?

- How many users will access the network from inside (employees, contractors, etc.)?

- Will access be required only at certain times or 24/7 (and across multiple time zones and/or internationally)?

- What kind of budget do I have?

- Will remote users access the network and, if so, how many?

- Will there be remote sites in geographically distant locations (requires a fail-safe mechanism such as replication to ensure data is synchronized across the network)?

Next, you should specify responsibilities for security requirements, communicate your expectations to your users (one of the weakest links in any security policy), and establish role(s) for your network administrator. It should list policies for activities such as web browsing, downloading, local and remote access, and authentication types. You should cover issues such as adding users, assigning privileges, dealing with lost tokens or compromised passwords, and under what circumstances to remove users from the access database.

You should establish a security team (sometimes called a "tiger team") that will be responsible for creating security policies that are practical, enforceable, and sustainable. They must find the best plan to implement these policies in a way that ensures network resources are both protected and user-friendly. They must develop plans to respond to threats as well as programs to update equipment and software. And there should be a very clear policy for handling changes in overall network security, such as the types of connections that will and will not be allowed through your firewall. This is especially important because you don't want an unauthorized user to gain access, reach into your network, and simply retrieve files or data.

10. Risk Analysis

Businesses with e-commerce or confidential activities need to conduct a risk analysis to determine the risks they may face. This analysis should take into account how information is processed, who has access to it, and similar factors. Depending on the risks, a business may need to rethink its network design. For a small business, a simple extranet/intranet setup and moderate firewall protection may be sufficient. However, these measures will be insufficient for a company dealing with financial data. In this case, what is needed is a tiered system. Businesses may also need to separate the corporate side (email, intranet access, etc.) from a separate, secure network that has no connection to the Internet or the corporate side. Only a user with physical access can reach such a network, and data can only be transferred to or from it using physical media. These networks can be used for systems such as test or laboratory systems, or for the storage or processing of critical or confidential information. In Department of Defense parlance, these networks are called red networks or black networks.

Vulnerability Testing

Your security policy should include regular vulnerability testing. Some very good vulnerability testing tools such as WebInspect, Acunetix, GFI LANguard, Nessus, HFNetChk and Tripwire allow you to do your own security testing. There are also third-party companies that have a suite of state-of-the-art testing tools that can be contracted to scan your network for open and/or accessible ports, weaknesses in firewalls, and Website vulnerability.

Audits

You should also consider regular and detailed audits of all activities, with emphasis on those that appear close to or outside established norms. For example, audits that reveal high rates of data exchange after normal business hours, where such traffic would not normally be expected, are an issue to be investigated. Perhaps, after checking, you will find that this is nothing more than an employee downloading music or video files. But the important thing is that your monitoring system sees the increase in traffic and determines that it is a simple Internet usage policy violation rather than someone siphoning off more critical data.
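The after-hours check described above can be sketched in a few lines of Python. This is a minimal illustration, not a monitoring product: the business-hours range, the byte limit, and the host names are hypothetical values chosen for the example, and weekends are not handled.

```python
from datetime import datetime

# Hypothetical policy values; tune them to your own traffic baseline.
BUSINESS_HOURS = range(8, 18)          # 08:00 to 17:59 local time
AFTER_HOURS_LIMIT_BYTES = 500_000_000  # per host, per hour

def flag_after_hours_traffic(records):
    """Return (host, hour, bytes) tuples that exceed the after-hours limit.

    `records` is an iterable of (timestamp, host, bytes_transferred).
    """
    totals = {}
    for timestamp, host, byte_count in records:
        if timestamp.hour in BUSINESS_HOURS:
            continue  # traffic during business hours is expected
        hour = timestamp.replace(minute=0, second=0, microsecond=0)
        totals[(host, hour)] = totals.get((host, hour), 0) + byte_count
    return sorted(
        (host, hour, total)
        for (host, hour), total in totals.items()
        if total > AFTER_HOURS_LIMIT_BYTES
    )

sample = [
    (datetime(2024, 3, 4, 14, 5), "ws-17", 900_000_000),   # daytime, ignored
    (datetime(2024, 3, 4, 23, 10), "ws-17", 400_000_000),
    (datetime(2024, 3, 4, 23, 40), "ws-17", 300_000_000),
    (datetime(2024, 3, 4, 23, 15), "ws-02", 10_000_000),
]
flagged = flag_after_hours_traffic(sample)
```

Here only `ws-17` is flagged, because its two transfers in the 23:00 hour add up to more than the limit. Whether that turns out to be a music download or a data leak is still a question for a human reviewer.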

There should be clearly established rules for dealing with security, usage and/or policy violations, as well as attempted or actual intrusions. Trying to work out what to do after an intrusion has already occurred is too late. And if an intrusion occurs, there must be a clear system for determining the extent of the damage, isolating the exploited application, port, or machine, and responding rapidly to plug the hole against further attacks.

Recovery

It is important to include recovery after an attack in the plan. This covers issues such as restructuring the network and closing the exploited vulnerability. It may take time to detect the entry point. In addition, the damage must be estimated: what was taken or compromised, and whether malicious code was left behind. If malicious code is present on the affected system, consideration should also be given to how to remove it most efficiently. If there is a virus in the company's email system, sending and receiving email may be halted for days. If the attack destroyed files, how to reconstruct the network should be discussed. This will often require more than just reinstalling machines from archived backups. Steps must be taken to speed up recovery without hindering analysis efforts. Regular review of the disaster recovery plan is necessary to ensure it meets current needs. The plan should be representative of all departments and should address new threat notifications, software patches, updates, and employee turnover.

11. Security Products

While the tools available for people looking to break into your space are impressive, you also have a wide variety of tools to help you keep them out. But before implementing a network security strategy, you need to be aware of the specific needs of the people who will use your resources. Simple antispyware and antispam tools are not enough. In today's rapidly changing software environment, strong security requires penetration protection, threat signature recognition, autonomous response to identified threats, and the ability to upgrade your tools when needed.

The following discussion talks about some of the more common tools you should consider adding to your arsenal.

Firewalls

Your first line of defense should be a good firewall, or better yet, a system that effectively combines several security features. Secure Firewall (formerly Sidewinder) from Secure Computing is one of the most powerful and secure firewall products available and, as of this writing, has never been successfully hacked. It is trusted and used by government and defense agencies. Secure Firewall combines the five most essential security systems (firewall, antivirus/spyware/spam, virtual private network (VPN), application filtering, and intrusion prevention/detection systems) into a single device.

Intrusion Prevention Systems (IPS)

A good intrusion prevention system (IPS) is a step up from a basic firewall and provides the ability to create autonomous policies. An IPS decides how to respond to application-level threats as well as simple IP address or port-level attacks. IPS products can automatically drop suspicious packets and, in some cases, place them in a "quarantine" file. An IPS can also be considered an application-layer firewall because it makes decisions based on application context. For an IPS to be effective, it must be good at distinguishing a real threat signature from one that merely looks like one (a false positive). When an intrusion is detected, the system must quickly notify the administrator so that appropriate evasive measures can be taken. There are different types of IPS.

- Network-based. Network-based IPSs create a series of choke points within the organization that detect suspected intrusion activity. Placed where they are needed, these systems invisibly monitor network traffic for known attack signatures and then block it.

- Host-based. These systems reside on servers and individual machines, rather than on the network per se. They silently monitor activities and requests from applications, weeding out actions that are prohibited by nature. These systems are generally very good at detecting post-decryption login attempts.

- Content-based. These IPSs scan network packets, looking for signatures of content that is unknown or unrecognizable, or that is clearly labeled as threatening in nature.

- Rate-based. These IPSs look for activity that falls outside normal levels, such as activity that appears to be related to password cracking and brute force penetration attempts.
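As a rough illustration of the rate-based idea in the last item, the following Python sketch counts failed logins per source in a sliding time window. The class name, thresholds, and source address are assumptions made for the example; commercial IPS products use far richer baselines.

```python
from collections import defaultdict, deque

class FailedLoginRateDetector:
    """Flag a source when it exceeds `max_failures` within `window_seconds`."""

    def __init__(self, max_failures=5, window_seconds=60):
        self.max_failures = max_failures
        self.window_seconds = window_seconds
        self._failures = defaultdict(deque)  # source -> failure timestamps

    def record_failure(self, source, timestamp):
        """Record one failed login; return True if the source should be flagged."""
        window = self._failures[source]
        window.append(timestamp)
        # Drop failures that have fallen out of the sliding window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_failures

detector = FailedLoginRateDetector(max_failures=3, window_seconds=10)
# Four failures in three seconds trip the detector; a lone failure later does not.
results = [detector.record_failure("203.0.113.9", t) for t in (0, 1, 2, 3, 20)]
```

The sliding window is what separates a burst of guesses from the occasional mistyped password, which is the distinction a rate-based system depends on.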

When looking for a good IPS, look for one that provides at least the following:

- Strong protection for your applications, host systems, and individual network elements against exploitation of vulnerability-based threats such as "single bullet attacks," Trojans, worms, botnets, and the secret creation of "backdoors" in your network

- Protection against threats that exploit vulnerabilities in certain applications, such as web services, mail, DNS, SQL, and Voice over IP (VoIP) services.

- Detection and elimination of spyware, phishing and anonymizers (tools that allow Internet activities to be carried out secretly by hiding the identity information of the source computer)

- Protection against brute-force and DoS attacks, application scanning and flooding.

- A regular method for updating threat lists and signatures (threat intelligence)

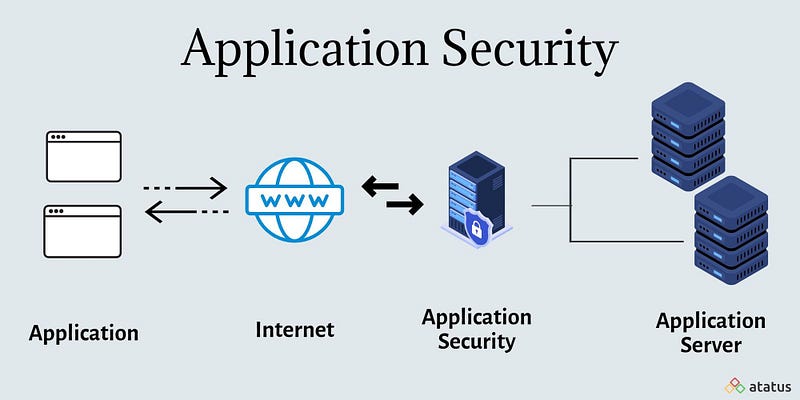

Application Firewalls

Confusion can arise between application firewalls (AFs) and IPSs. AFs are designed to limit or deny access to an application. AFs prevent malicious code from being executed while closing vulnerabilities in an operating system. They block data from certain websites or content by analyzing the type of data stream coming from applications, and they detect attempts to exploit an application's vulnerabilities. AFs use proxies to prevent attacks and focus on traditional firewall functions. They detect the signatures of recognized threats and prevent them from infecting the network. Some operating systems offer an application firewall as a built-in feature. For example, Windows' Data Execution Prevention (DEP) feature prevents malicious code from being executed. Mac OS X version 10.5.x offers an application firewall as standard.

Access Control Systems

Access control systems (ACSs) are based on rules that govern access to protected network resources. These rules control access requests using methods such as tokens or biometric devices for user authentication. They can also restrict access to network services and data depending on the time of day or the needs of a group. Some ACS products allow you to create a set of access control lists (ACLs), called rules, that define the security policy. These rules may restrict access based on factors such as a specific user, time, IP address, or the system being logged in to. ACS products such as SafeWord can strengthen network access using two-factor authentication. Such a system authenticates users with something they know (for example, a personal identification number, or PIN) and something they have (a token that generates one-time passwords, or OTPs). ACSs allow administrators to control access to network resources by defining customized access rules and restrictions, and they play an important role in information security.
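To make rule-based access control concrete, here is a minimal Python sketch of an access control list that checks group, source network, time of day, and resource. It does not reflect how SafeWord or any other product stores its rules; the field names, groups, networks, and the `ledger-db` resource are all hypothetical.

```python
from dataclasses import dataclass
from datetime import time
from ipaddress import ip_address, ip_network

@dataclass(frozen=True)
class AccessRule:
    group: str     # user group the rule applies to
    network: str   # permitted source network in CIDR notation
    start: time    # earliest permitted time of day
    end: time      # latest permitted time of day
    resource: str  # protected resource name

def is_allowed(rules, group, source_ip, at, resource):
    """Default deny: allow only if some rule matches every attribute."""
    for rule in rules:
        if (rule.group == group
                and rule.resource == resource
                and ip_address(source_ip) in ip_network(rule.network)
                and rule.start <= at <= rule.end):
            return True
    return False

RULES = [
    AccessRule("finance", "10.1.0.0/16", time(8, 0), time(18, 0), "ledger-db"),
    AccessRule("admins", "10.0.0.0/8", time(0, 0), time(23, 59, 59), "ledger-db"),
]

print(is_allowed(RULES, "finance", "10.1.4.20", time(9, 30), "ledger-db"))
print(is_allowed(RULES, "finance", "10.1.4.20", time(22, 0), "ledger-db"))
```

The function denies by default, so a request that matches no rule is refused. That is usually the safer starting point for a policy than an allow-by-default list with exceptions.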

Unified Threat Management (UTM)

Recent trends include the emergence of unified threat management (UTM) systems. UTM systems offer a multi-layered structure by combining multiple security technologies on a single platform. UTM products bundle capabilities such as antivirus, VPN, and firewall services with features such as intrusion prevention and antispam. One of the biggest advantages of UTM systems is their ease of use and configuration and the fact that they can be quickly updated. Sidewinder by Secure Computing is a UTM system with these features. Other UTM systems include Symantec's Enterprise Firewall and Gateway Security Enterprise, Fortinet, LokTek's AIRlok Firewall Appliance, and SonicWall's NSA 240 UTM Appliance. These systems are flexible, fast to update, easy to manage, and offer various security features.

12. Controlling User Access

Traditionally, users, also known as employees, have been the weakest link in a company's defense armor. While they are essential to the organization, they can be a nightmare waiting to happen to your network. How do you allow them to operate within the network while controlling their access to resources? You should make sure that your user authentication system knows who your users are.

Authentication, Authorization, and Accounting (AAA)

Authentication is simply proving that a user's identity claim is valid and genuine. Authentication requires some form of "proof of identity." With network technologies, physical evidence (like a driver's license or other photo ID) cannot be used, so you must obtain something else from the user. This usually means that the user must respond to a challenge to provide real credentials at the moment the user requests access.

For our purposes, credentials can be something the user knows, something they have, or something they are. Once authentication is established, there must also be authorization, or permission to enter. Finally, you want to keep a record of users' logins to your network: username, login time, and resources accessed. This is the accounting side of the process.

What the User Is

This refers to a user's natural characteristics that are difficult to replicate. Examples include:

- Fingerprint: Unique patterns on a person's fingertips.

- Facial Recognition: Distinctive facial features.

- Voice Recognition: Unique voice patterns.

- Iris Recognition: Complex patterns of the iris in the eye.

What the User Knows

Users often choose personal information such as birthdays, anniversaries, or their first car for authentication purposes, without realizing that this information is insecure. This category refers to information that only the user should know. It is the most common form of authentication, but it is also the most vulnerable to breaches. Examples include:

- Passwords: Secret character combinations.

- PINs (Personal Identification Numbers): Numeric codes.

- Security Questions: Answers to personal questions.

This type of information is used as fixed passwords and PINs in network technologies because it is easy to remember. However, for a password or PIN to be secure, certain conditions are required, such as a minimum number of characters or a mixture of letters and numbers. To reduce costs, some organizations allow users to set their own passwords and grant access on the strength of these weak credentials. However, fixed passwords can be guessed, and they add to the information the user already has to remember. For any security system to be effective, stronger authentication is required.

What the User Has

This category includes physical objects or digital tokens owned by a user. These can be:

- Smart Cards: Physical cards with embedded microchips.

- Security Tokens: Devices that generate one-time passwords.

- Mobile Phones: Used to receive authentication codes or for biometric authentication.

- Digital Certificates: Electronic credentials that verify identity.

Let's Combine

The most secure way to identify users is a combination of (1) a hardware device they own that is "known" to an authentication server on your network and (2) what they know. A number of devices available today (tokens, smart cards, biometric devices) are designed to identify a user more positively. Since in my opinion a good token is the safest of these options, I focus on tokens here.

A token is a device that uses an encryption algorithm and is recognized by the network's authentication server. There are two types of tokens: software and hardware. Software tokens can be installed on a user's desktop or mobile devices, while hardware tokens come in different forms: some work with just a button, while others have a more elaborate keypad. Tokens can be easily deactivated if lost or stolen. Tokens generate one-time passwords (OTPs), meaning they provide a fresh password that is valid for a single login attempt. Tokens can be programmed on-site or by vendors using programming software. After programming is complete, a file containing information about the token and its serial number is transmitted to the authentication server. Tokens are assigned to users by associating the serial number with the user.
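The text does not say which algorithm a given token uses. As one publicly documented example, the sketch below implements the counter-based HOTP scheme from RFC 4226 using only the Python standard library. The `verify` helper and its `look_ahead` parameter are illustrative assumptions about how a server might tolerate a token whose counter has run slightly ahead.

```python
import hashlib
import hmac
import struct

def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """Compute an HOTP value (RFC 4226) from a shared secret and a counter."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)

def verify(secret: bytes, counter: int, submitted: str, look_ahead: int = 2):
    """Return the next server counter if `submitted` matches, else None.

    Accepting a small look-ahead window lets the server resynchronize when
    the user pressed the token button without logging in.
    """
    for candidate in range(counter, counter + look_ahead + 1):
        if hmac.compare_digest(hotp(secret, candidate), submitted):
            return candidate + 1
    return None

secret = b"12345678901234567890"  # test secret from RFC 4226, Appendix D
print(hotp(secret, 0))  # 755224, the first RFC 4226 test vector
```

Because the server advances its counter after each successful login, a password that has been used once is rejected if it is replayed, which is the property that makes one-time passwords stronger than fixed ones.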

The User Is Authenticated, but Is the User Authorized?

Authorization is independent of authentication. A user may be allowed into the network but may not be authorized to access a resource. You wouldn't want an employee to have access to HR information or a corporate partner to confidential or proprietary information.

Authorization requires a set of rules that determine the resources a user can access. These permissions are determined in your security policy.
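A set of authorization rules can be as simple as a mapping from roles to the resources each role may use. The Python sketch below assumes hypothetical role and resource names; in practice the mapping would be derived from your written security policy rather than hard-coded.

```python
# Hypothetical roles and resources, for illustration only.
ROLE_PERMISSIONS = {
    "employee": {"intranet", "email"},
    "hr": {"intranet", "email", "hr-records"},
    "partner": {"partner-portal"},
}

def is_authorized(roles, resource):
    """Return True if any of the user's roles grants access to `resource`."""
    return any(resource in ROLE_PERMISSIONS.get(role, set()) for role in roles)

# An authenticated employee still cannot read HR records.
print(is_authorized(["employee"], "hr-records"))
print(is_authorized(["employee", "hr"], "hr-records"))
```

Note that the check runs after authentication and independently of it: the question is no longer who the user is, but what that user may touch.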

Accounting

Let's assume that our user is granted access to the requested resource. But you want (or in some cases need to have) the ability to call up and view activity logs to see who accessed which resource. This information is mandatory for organizations that handle users' financial or medical information or DoD confidential information, or are undergoing annual audits to maintain certification for international operations.

Accounting means recording, logging, and archiving all server activity, particularly activity related to access attempts and whether they were successful. This information should be recorded in audit logs that are stored and available for viewing whenever you need them. Logs must contain at least the following information:

- Identity of the user

- Date and time of the request

- Whether the request passed authentication and was accepted

Any network security system you implement should retain or archive these logs for a specified period of time and allow you to determine how long these archives will be preserved before they are aged out of the system.
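The minimum log fields and the retention requirement can be sketched as a small in-memory audit log. This is only an illustration under stated assumptions: the class names, the extra `resource` field, and the 90-day retention period are hypothetical, and a real system would write to durable, tamper-resistant storage.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass(frozen=True)
class AuditRecord:
    user: str               # identity of the user
    requested_at: datetime  # date and time of the request
    resource: str           # what the user tried to reach
    accepted: bool          # whether authentication passed and access was granted

class AuditLog:
    def __init__(self, retention: timedelta):
        self.retention = retention
        self._records = []

    def record(self, entry: AuditRecord):
        self._records.append(entry)

    def purge_expired(self, now: datetime) -> int:
        """Drop records older than the retention period; return how many."""
        cutoff = now - self.retention
        kept = [r for r in self._records if r.requested_at >= cutoff]
        removed = len(self._records) - len(kept)
        self._records = kept
        return removed

    def failures_for(self, user: str):
        """Return the rejected access attempts recorded for one user."""
        return [r for r in self._records if r.user == user and not r.accepted]

log = AuditLog(retention=timedelta(days=90))
log.record(AuditRecord("alice", datetime(2024, 1, 1, 9, 0), "ledger-db", True))
log.record(AuditRecord("bob", datetime(2024, 5, 1, 9, 0), "ledger-db", False))
removed = log.purge_expired(now=datetime(2024, 6, 1))
```

Keeping the retention period as an explicit setting, rather than an accident of disk space, makes it possible to show an auditor exactly how long records are preserved.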

Staying Up to Date

Going forward, it is important to keep up with constant developments in the field of network security to avoid being left behind. These advances include new systems that address threats through smarter, more autonomous processes, and faster, easier ways to develop, configure, and keep threat files up to date. Additionally, customization of access rules, authentication requirements, and user role assignments provides significant flexibility. To keep up, subscribe to newsletters, attend seminars and security trade shows, read white papers, and get support from network security experts if needed. The price of not cutting corners and staying ahead on security will be less than the cost of a security breach resulting from negligence.

13. Conclusion

Protecting the network from intrusions can be a challenging task. Like the cops on the street, you may be outnumbered and under-equipped compared with the enemy you face. This enemy may have increasingly sophisticated tools that can bypass even your best defenses. Therefore, no matter how good your defense is, you need to constantly keep up with developments. The most important way to be successful in network security is to take a logical, thoughtful, and agile approach. This means keeping up with technological changes and staying current by following technical reports, seminars, security experts, and online resources that address different aspects of network security. It is also important to have a network security policy that is easy to understand, so that all users know what is expected of them to best protect your network. It is also necessary to educate users about network security, because the more they know, the better they can help.

References

John R. Vacca, Network and System Security, Syngress (2010)

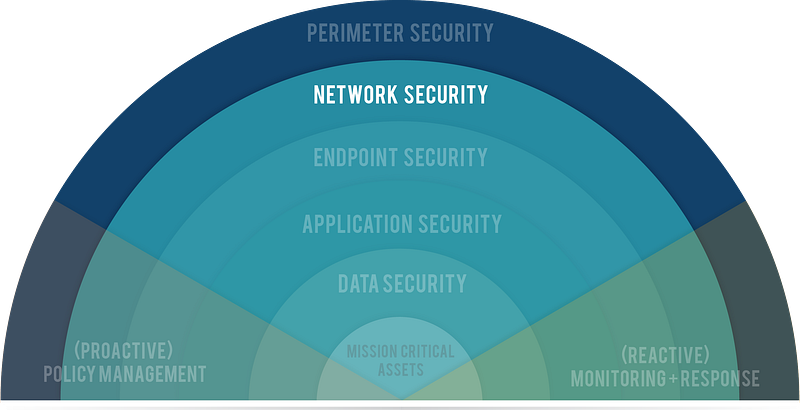

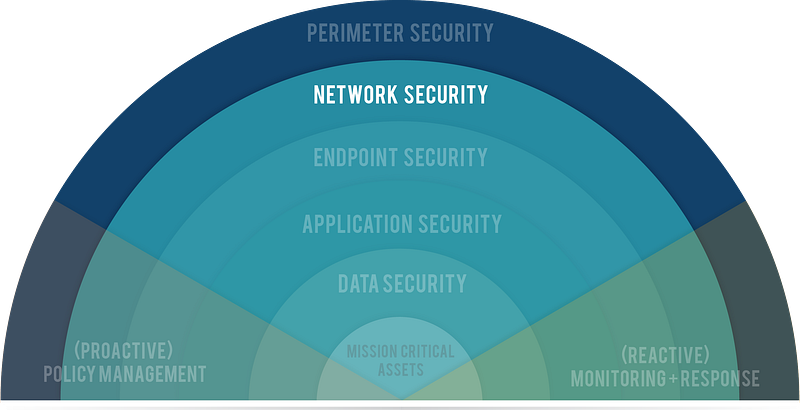

Defense in Depth Strategy

Most security experts agree that perfect network security is impossible and that any defense can eventually be bypassed. The defense-in-depth strategy aims to obstruct the attacker with multiple layers of defense while acknowledging that each layer can be overcome. More valuable assets are protected by more layers of defense. The combination of multiple layers increases the cost of a successful attack, and that cost should be proportional to the value of the assets protected. Additionally, the combination of multiple layers is more effective than a single optimized defense against unexpected attacks. The cost to the attacker may come in the form of additional time, effort, or equipment. For example, delaying an attacker can increase an organization's chances of detecting and responding to the attack in progress. If the increased costs outweigh the gains from a successful attack, some attempts may be discouraged.

Defense In Depth

Defense in depth is sometimes said to involve people, technology and operations. Trained security personnel must be responsible for the security and information assurance of facilities. However, every computer user in an organization should be made aware of security policies and practices. Every home Internet user should learn about safe practices (such as avoiding opening email attachments or clicking suspicious links) and the benefits of proper protection (antivirus software, firewalls).

Various technological measures can be used as protection layers. These include firewalls, IDSs, access control lists (ACLs), antivirus software, access control, and spam filters.


Preventive Measures: Proactive Approach

Most computer users are aware that Internet use poses security risks. It makes sense to take precautions to minimize exposure to attacks. Fortunately, several options are available for computer users to reduce risks by strengthening their systems.

1- Access Control

In computer security, access control refers to mechanisms that allow users to perform functions up to the level to which they are authorized and restrict users from performing unauthorized functions. Access control includes:

- Authentication of users

- Authorization of privileges

- Auditing to monitor and record user actions

All computer users will be familiar with some form of access control.

Authentication is the process of verifying a user's identity. Authentication is typically based on one or more of these factors:

- Something the user knows, such as a password or PIN

- Something the user has, such as a smart card or token

- Something the user is, such as a fingerprint, retinal pattern, or other biometric identifier

Using a single factor is considered weak authentication, even if multiple proofs are presented. The combination of two factors such as password and fingerprint, called two-factor (or multi-factor) authentication, is considered strong authentication.
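The distinction between several proofs and several factors can be shown with a short Python sketch. The credential names and their mapping to factor categories are assumptions made for illustration.

```python
from enum import Enum

class Factor(Enum):
    KNOWLEDGE = "something the user knows"
    POSSESSION = "something the user has"
    INHERENCE = "something the user is"

# Hypothetical mapping from credential type to factor category.
CREDENTIAL_FACTORS = {
    "password": Factor.KNOWLEDGE,
    "pin": Factor.KNOWLEDGE,
    "smart_card": Factor.POSSESSION,
    "otp_token": Factor.POSSESSION,
    "fingerprint": Factor.INHERENCE,
}

def is_strong_authentication(credentials):
    """True only when the credentials span at least two distinct factors."""
    factors = {CREDENTIAL_FACTORS[c] for c in credentials}
    return len(factors) >= 2

# A password plus a PIN is two proofs but still a single factor.
print(is_strong_authentication(["password", "pin"]))
print(is_strong_authentication(["password", "fingerprint"]))
```

Counting distinct categories, rather than the number of credentials presented, is what keeps a password-plus-PIN login from being mistaken for two-factor authentication.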

Authorization is the process of determining what an authenticated user can do. Most operating systems have a set of permissions regarding read, write, or execute access. For example, an ordinary user may have permission to read a particular file but not permission to write to the file, whereas a root or superuser will have full privileges to do everything.

Auditing is necessary to ensure that users are held accountable. Computer systems record actions in the system in audit trails and logs. For security purposes, they are invaluable forensic tools for recreating and analyzing events. For example, a user who makes many unsuccessful login attempts may be viewed as an intruder.

2-Vulnerability Testing and Patching

As mentioned before, vulnerabilities are weaknesses in software that can be exploited to compromise a computer. Vulnerable software includes all types of operating systems and application programs. New vulnerabilities are constantly being discovered in different ways. New vulnerabilities discovered by security researchers are usually reported confidentially to the vendor, giving the vendor time to examine the vulnerability and develop a patch. 50% of all vulnerabilities disclosed in 2007 could be fixed with vendor patches. Once ready, the vendor will publish the vulnerability, hopefully along with a patch.

It has been claimed that publishing vulnerabilities would help attackers. While this may be true, publishing also raises awareness throughout society. System administrators will be able to evaluate their systems and take appropriate action. System administrators may be expected to know the configuration of computers on their network, but in large organizations it will be difficult to keep track of possible configuration changes made by users. Vulnerability testing provides a simple way to obtain information about the configuration of computers on a network.

Vulnerability testing is an exercise in probing systems for known vulnerabilities. It requires a database of known vulnerabilities, a packet generator, and test routines that create a sequence of packets to test for a specific vulnerability. If a security vulnerability is found and a software patch is available, the computer should be patched at that time.
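Real scanners probe hosts over the network with crafted packets. The Python sketch below shows only the simpler database-lookup half of the idea: comparing an inventory of installed versions against a list of versions in which a flaw was fixed. The product names, version numbers, and advisory identifiers are placeholders and do not refer to real software or advisories.

```python
# Hypothetical vulnerability database: product -> [(first fixed version, advisory id)].
KNOWN_VULNERABILITIES = {
    "examplehttpd": [((2, 4, 10), "ADVISORY-0001")],
    "examplemail": [((1, 8, 0), "ADVISORY-0002"), ((1, 9, 3), "ADVISORY-0003")],
}

def parse_version(text):
    """Turn '2.4.9' into (2, 4, 9) so that versions compare numerically."""
    return tuple(int(part) for part in text.split("."))

def find_unpatched(inventory):
    """Return (host, product, advisory) for software older than a fixed version."""
    findings = []
    for host, product, version in inventory:
        installed = parse_version(version)
        for fixed_in, advisory in KNOWN_VULNERABILITIES.get(product, []):
            if installed < fixed_in:
                findings.append((host, product, advisory))
    return findings

inventory = [
    ("web-1", "examplehttpd", "2.4.9"),
    ("web-2", "examplehttpd", "2.4.10"),
    ("mail-1", "examplemail", "1.8.5"),
    ("db-1", "exampledb", "5.0.0"),
]
findings = find_unpatched(inventory)
```

Parsing versions into tuples matters here: compared as plain strings, "2.4.9" would sort after "2.4.10" and the unpatched host would be missed.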

3- Closing Ports

Transport layer protocols, namely Transmission Control Protocol (TCP) and User Datagram Protocol (UDP), identify the applications that communicate with each other by port numbers. Port numbers 1 through 1023 are well-known and are assigned by the Internet Assigned Numbers Authority (IANA) to standardized services running with root privileges. For example, Web servers listen on TCP port 80 for client requests. Port numbers 1024 through 49151 are used by various applications with ordinary user privileges. Port numbers above 49151 are used dynamically by applications.

It is a good practice to close unnecessary ports, as attackers can use open ports, especially those in the higher range. For example, the Sub7 Trojan is known to use port 27374 by default, and Netbus uses port 12345. However, closing ports alone does not guarantee the security of a computer. Some computers must keep TCP port 80 open for HyperText Transfer Protocol (HTTP), but attacks can be carried out through this port.
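The port ranges above, and a basic check for listening ports, can be sketched with Python's standard `socket` module. The function names are illustrative, and the check is a plain TCP connect test intended for machines you administer, not a full port scanner.

```python
import socket

def classify_port(port: int) -> str:
    """Name the IANA range a TCP or UDP port number falls into."""
    if not 0 <= port <= 65535:
        raise ValueError("port must be between 0 and 65535")
    if port <= 1023:
        return "well-known"  # port 0 is reserved but sits in this range
    if port <= 49151:
        return "registered"
    return "dynamic"

def is_tcp_port_open(host: str, port: int, timeout: float = 0.5) -> bool:
    """Return True if a TCP connection to host:port succeeds."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        sock.settimeout(timeout)
        return sock.connect_ex((host, port)) == 0

def open_ports(host: str, ports):
    """List the ports from `ports` that accept TCP connections on `host`."""
    return [p for p in ports if is_tcp_port_open(host, p)]

# Ports mentioned in the text: HTTP, Netbus, and Sub7 defaults.
for port in (80, 12345, 27374):
    print(port, classify_port(port))
```

Running `open_ports("127.0.0.1", [80, 12345, 27374])` on your own machine shows which of these ports currently has a listener. Any result you cannot explain is a candidate for closing.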

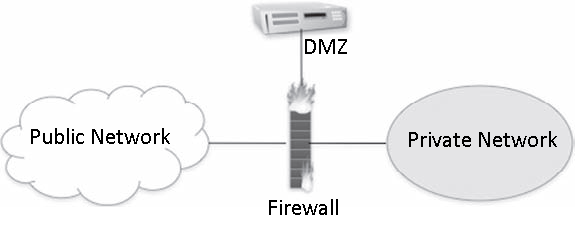

4- Firewalls

When most people think of network security, firewalls are one of the first things that come to mind. Firewalls are a perimeter security tool that protects an internal network from external threats. A firewall selectively allows or blocks incoming and outgoing traffic. Firewalls can be standalone network devices located at the entrance to a private network or personal firewall programs running on computers. An organization's firewall protects the internal community; a personal firewall can be customized to an individual's needs.

A firewall that isolates various network zones.

Firewalls can provide separation and isolation between various network zones, namely the public Internet, private intranets, and a demilitarized zone (DMZ), as shown in the figure. The semi-protected DMZ typically includes public services provided by an organization. Public servers need some protection from the public Internet, so they are usually located behind a firewall. This firewall cannot be completely restrictive because public servers must be accessible from the outside.

There are various types of firewalls: packet-filtering firewalls, stateful firewalls, and proxy firewalls. In any case, the effectiveness of a firewall depends on the configuration of its rules. Properly written rules require detailed knowledge of network protocols. Unfortunately, some firewalls are configured incorrectly because of negligence or lack of training.

Packet-filtering firewalls analyze packets in both directions and allow or deny passage based on a set of rules. Rules often examine port numbers, protocols, IP addresses, and other attributes of packet headers. There is no attempt to associate multiple packets with a flow or stream. The firewall is stateless, retaining no memory from one packet to the next.
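A stateless packet filter can be sketched as an ordered rule list in which the first matching rule decides. The following Python example is a simplification under stated assumptions: the `Packet` and `Rule` types are hypothetical, only a few header fields are modeled, and the addresses come from ranges reserved for documentation.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Optional

@dataclass(frozen=True)
class Packet:
    src_ip: str
    dst_ip: str
    protocol: str  # "tcp" or "udp"
    dst_port: int

@dataclass(frozen=True)
class Rule:
    action: str                     # "allow" or "deny"
    src_net: str = "0.0.0.0/0"      # any source by default
    dst_net: str = "0.0.0.0/0"      # any destination by default
    protocol: Optional[str] = None  # None matches any protocol
    dst_port: Optional[int] = None  # None matches any port

    def matches(self, packet: Packet) -> bool:
        return (ip_address(packet.src_ip) in ip_network(self.src_net)
                and ip_address(packet.dst_ip) in ip_network(self.dst_net)
                and self.protocol in (None, packet.protocol)
                and self.dst_port in (None, packet.dst_port))

def filter_packet(rules, packet: Packet) -> str:
    """Stateless decision: the first matching rule wins, otherwise deny."""
    for rule in rules:
        if rule.matches(packet):
            return rule.action
    return "deny"

RULES = [
    Rule("deny", src_net="198.51.100.0/24"),  # block a hostile network
    Rule("allow", dst_net="192.0.2.10/32", protocol="tcp", dst_port=80),
]

print(filter_packet(RULES, Packet("203.0.113.5", "192.0.2.10", "tcp", 80)))
```

Each call to `filter_packet` looks at one packet in isolation, which is exactly the statelessness described above. Rule order matters, since an earlier broad rule can shadow a later specific one.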

Stateful firewalls overcome the limitation of packet filtering firewalls by recognizing packets belonging to the same flow or connection and keeping track of the connection state. They operate at the network layer and recognize the legitimacy of sessions.
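Connection tracking can be illustrated with a small Python sketch that remembers outbound connections and admits only the inbound packets that answer them. This is a deliberately reduced model with hypothetical names: real stateful firewalls also follow TCP flags, sequence numbers, and idle timeouts.

```python
class StatefulFirewall:
    """Track outbound connections and admit only matching replies."""

    def __init__(self):
        # Each entry is (inside_ip, inside_port, outside_ip, outside_port).
        self._connections = set()

    def outbound(self, src_ip, src_port, dst_ip, dst_port):
        """An inside host opens a connection; remember it and let it pass."""
        self._connections.add((src_ip, src_port, dst_ip, dst_port))
        return "allow"

    def inbound(self, src_ip, src_port, dst_ip, dst_port):
        """Allow an inbound packet only if it answers a tracked connection."""
        if (dst_ip, dst_port, src_ip, src_port) in self._connections:
            return "allow"
        return "deny"

    def close(self, src_ip, src_port, dst_ip, dst_port):
        """Forget a connection once the inside host has closed it."""
        self._connections.discard((src_ip, src_port, dst_ip, dst_port))

fw = StatefulFirewall()
fw.outbound("10.0.0.5", 50123, "203.0.113.80", 443)
reply = fw.inbound("203.0.113.80", 443, "10.0.0.5", 50123)        # tracked reply
unsolicited = fw.inbound("203.0.113.80", 443, "10.0.0.5", 50124)  # no such connection
```

The second inbound packet comes from the same server and port but matches no tracked connection, so it is refused. A stateless filter with an "allow replies from port 443" rule could not make that distinction.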

Proxy firewalls are also called application-level firewalls because they operate up to the application layer. They recognize specific applications and can detect whether an unwanted protocol is using a non-standard port or if an application layer protocol is being abused. They protect an internal network by serving as primary gateways for proxy connections from the internal network to the public Internet. Due to the nature of the analysis, they may have some impact on network performance.

Firewalls are essential elements of an overall defense strategy, but they have the disadvantage that they only protect the perimeter. They are useless if an attacker has a way to bypass the perimeter. They are also useless against insider threats originating from a private network.

5- Antivirus and Antispyware Tools

The proliferation of malicious software creates the need for antivirus software. Antivirus software was developed to detect the presence of malware, identify its nature, remove malicious software (disinfect the computer), and protect a computer from future infections. Detection should ideally minimize false positives (false alarms) and false negatives (missed malware) simultaneously. Antivirus software faces a number of challenges:

- Malware tactics are complex and constantly evolving.

- Even the operating system on infected computers cannot be trusted.

- Malware can reside entirely in memory without affecting files.

- Malware can attack antivirus processes.

- The processing load of the antivirus software must not degrade computer performance to the point where annoyed users turn the antivirus software off.

One of the simplest tasks performed by antivirus software is file scanning. This process compares bytes in files with known signatures, which are byte patterns indicative of known malware. It represents the general approach of signature-based detection. When new malware is captured, it is analyzed for unique characteristics that can be identified in a signature. The new signature is distributed as an update to antivirus programs. The antivirus looks for the signature when scanning the file, and if a match is found, the signature specifically identifies malware. However, this method has significant drawbacks: Developing and testing new signatures takes time; users must keep their signature files up to date; and new malware without a known signature may not be detected.
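Signature-based file scanning reduces to searching file contents for known byte patterns. The Python sketch below uses a made-up signature database; the names and patterns are placeholders that match no real malware, and production scanners use far more efficient multi-pattern matching.

```python
# Hypothetical signature database: name -> byte pattern.
SIGNATURES = {
    "Example.Dropper.A": bytes.fromhex("deadbeef0badf00d"),
    "Example.Worm.B": b"EXAMPLE-WORM-MARKER",
}

def scan_bytes(data: bytes):
    """Return the sorted names of all signatures found in `data`."""
    return sorted(name for name, pattern in SIGNATURES.items() if pattern in data)

def scan_file(path):
    """Read a file and scan its contents against the signature database."""
    with open(path, "rb") as handle:
        return scan_bytes(handle.read())

infected = b"\x00\x01" + bytes.fromhex("deadbeef0badf00d") + b"tail"
print(scan_bytes(infected))     # ['Example.Dropper.A']
print(scan_bytes(b"harmless"))  # []
```

The sketch also makes the drawbacks listed above visible: a sample that is not yet in `SIGNATURES`, or one that alters the matching bytes, passes the scan untouched.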

Behavior-based detection is a complementary approach. Rather than addressing what the malware is, behavior-based detection looks at what the malware is trying to do. In other words, anyone who attempts a risky action will fall under suspicion. This approach overcomes the limitations of signature-based detection and can find new malware without a signature, just based on its behavior. However, this approach may be difficult in practice. First, we need to define what is suspicious behavior or, conversely, what is normal behavior. This definition often relies on heuristic rules developed by security experts because it is difficult to precisely define normal behavior. Second, it may be possible to distinguish suspicious behavior, but identifying malicious behavior is much more difficult because bad faith must be inferred. When behavior-based detection flags suspicious behavior, further follow-up investigation is often required to better understand threat risk.

The ability of malware to change or hide its appearance can defeat file scanning. However, regardless of its form, malware must ultimately do its job. Therefore, if malware is given a chance to work, there will always be an opportunity to detect it from its behavior. Antivirus software will monitor system events such as hard drive access to look for actions that may pose a threat to the computer. Events are monitored by capturing calls to operating system functions.

While monitoring system events is a step beyond scanning files, the malicious program still runs in the computer's real execution environment and can therefore pose a risk to it. The idea of emulation is to run suspicious code in an isolated environment, present a simulated view of computer resources to the code, and look for actions that are indicative of malware.

Virtualization takes emulation one step further and executes suspicious code within a real operating system. A number of virtual operating systems can run on top of the host operating system. Malware can corrupt a virtual operating system, but for security reasons a virtual operating system has limited access to the host operating system. A "sandbox" prevents the virtual environment from interfering with the host environment unless a specific action is requested and allowed. In contrast, emulation does not expose an operating system to questionable code; the code is allowed to run step by step, but in a controlled and constrained way, just to discover what it will try to do.

Anti-spyware software can be viewed as a special class of antivirus software. Slightly different from traditional viruses, spyware can be particularly harmful because it makes numerous changes to the hard disk and system files. Infected systems tend to have a large number of spyware programs installed, possibly including certain cookies (bits of text that websites place in the browser so that they can remember the visitor).

6- Spam Filtering

Every Internet user is familiar with spam email. There is no consensus on an exact definition of spam, but most people agree that spam is unsolicited, mass sent, and commercial in nature. There is also consensus that the vast majority of emails are spam. Spam remains a problem because a small portion of recipients respond to these messages. Although this percentage is small, the revenue generated is enough to make spam profitable because the cost of sending spam in bulk is low. A particularly large botnet can quickly generate enormous amounts of spam.

Users of popular webmail services such as Yahoo! Mail and Hotmail are attractive targets for spam because their addresses can be easy to guess. In addition, spammers collect email addresses from various sources: websites, newsgroups, online directories, data-stealing viruses, and so on. Spammers may also purchase address lists from companies willing to sell customer information.

Spam is more than just an inconvenience to users and a waste of network resources. Spam is a popular tool for distributing malware and redirecting to malicious Web sites. It is the first step of phishing attacks.

Spam filters work on a corporate and personal level. At the enterprise level, email gateways can protect an entire organization by scanning incoming messages for malware and blocking messages from suspicious or fraudulent senders. One concern at the corporate level is the rate of false positives, which are legitimate messages mistaken for spam. Users whose legitimate mail is blocked may be upset. Fortunately, spam filters can often be customized, making the rate of false positives very low. Additional spam filtering at a personal level can further customize filtering to account for individual preferences.
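The trade-off between catching spam and avoiding false positives can be illustrated with a deliberately simple keyword filter. The term weights, default threshold, and example addresses below are assumptions made up for this sketch; production filters rely on much more sophisticated techniques such as statistical classification and sender reputation.

```python
import re
from typing import Dict, FrozenSet

# Hypothetical weights for words that often appear in spam.
SPAM_TERMS: Dict[str, int] = {"free": 2, "winner": 3, "viagra": 4, "click": 1, "urgent": 1}


def spam_score(message: str) -> int:
    """Add up the weights of all spam-related words in the message."""
    words = re.findall(r"[a-z]+", message.lower())
    return sum(SPAM_TERMS.get(word, 0) for word in words)


def is_spam(message: str, sender: str, threshold: int = 4,
            allowlist: FrozenSet[str] = frozenset()) -> bool:
    """Classify a message; senders on the allowlist are never blocked."""
    if sender.lower() in allowlist:
        return False
    return spam_score(message) >= threshold
```

With the default threshold, "Free lunch on Friday" scores 2 and is delivered. Lowering the threshold to 2 would block it, which is a false positive; adding the sender to the allowlist is one way such a filter can be customized to avoid that.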

7- Honeypots

The basic idea of a honeypot is to learn about attacker techniques by attracting attacks against a seemingly defenseless computer. It is essentially a forensic tool rather than a line of defense. A honeypot can be used to gain valuable information about attack methods used elsewhere or about imminent attacks before they happen. Honeypots are routinely used in research and production environments.

A honeypot has more specific requirements than a regular computer. First, a honeypot must not be used for legitimate services or traffic. As a result, any activity seen by the honeypot is illegitimate. Although honeypots generally record little data compared to IDSs, there is very little "noise" in that data, whereas the bulk of IDS data is generally uninteresting from a security perspective.

Second, a honeypot must have comprehensive and reliable capabilities to monitor and record all activities. The forensic value of a honeypot depends on the detailed information it can capture about attacks.

Third, a honeypot must be isolated from the actual network. Since honeypots are intended to attract attacks, there is a real risk that the honeypot will be hijacked and used as a launch pad to attack more computers on the network.

Honeypots are generally classified according to their level of interaction, from low to high. Low-interaction honeypots like Honeyd provide simple services. An attacker could try to compromise the honeypot but would not gain much. However, limited interaction creates the risk that the attacker will discover that the computer is a honeypot. At the other end of the range, high-interaction honeypots behave more like real systems. They have greater ability to engage an attacker and record activities, but they offer the attacker more to gain if compromised.

8- Network Access Control (NAC)

A vulnerable computer can put not only itself but the entire community at risk. First of all, a vulnerable computer can attract attacks. If compromised, the host can be used to launch attacks against other hosts. The compromised computer may provide information to the attacker, or there may be trust relationships between computers that could help the attacker. In any case, it is undesirable to have a poorly protected computer in your network.

Network Access Control

The general idea of network access control (NAC) is to restrict a host's access to a network unless the computer can provide evidence of a strong security posture. The NAC process involves the computer, the network (usually routers or switches and servers), and a security policy, as shown in the figure.

In some implementations, a software agent runs on the computer, collects information about the computer's security posture, and reports it to the network as part of the network admission request. The network consults a policy server to compare the host's security posture with its security policy to make an acceptance decision.

The acceptance decision can be anything from rejection to partial acceptance or full acceptance. Rejection may occur because of outdated antivirus software, an operating system that requires patching, or a misconfigured firewall. Rejection may lead to quarantine (redirection to an isolated network) or forced remediation.

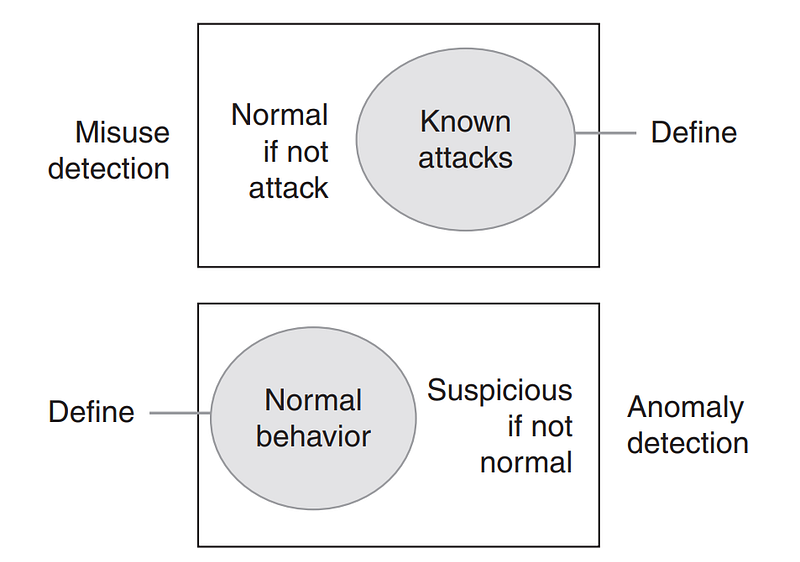
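The admission decision described above can be sketched as a small policy check. Everything in this example is an assumption made for illustration: the posture fields, the seven-day signature limit, and in particular the rule that a single failed check leads to partial acceptance while several lead to rejection. A real policy server would define its own rules.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Decision(Enum):
    FULL = "full acceptance"
    PARTIAL = "partial acceptance"
    REJECT = "rejection (quarantine or forced remediation)"


@dataclass(frozen=True)
class Posture:
    """Security posture reported by the (hypothetical) agent on the host."""
    antivirus_signature_age_days: int
    os_patched: bool
    firewall_enabled: bool


@dataclass(frozen=True)
class Policy:
    """Hypothetical policy held by the policy server."""
    max_signature_age_days: int = 7


def failed_checks(posture: Posture, policy: Policy = Policy()) -> List[str]:
    """List the policy requirements that the host does not meet."""
    failures = []
    if posture.antivirus_signature_age_days > policy.max_signature_age_days:
        failures.append("antivirus signatures outdated")
    if not posture.os_patched:
        failures.append("operating system needs patching")
    if not posture.firewall_enabled:
        failures.append("firewall disabled or misconfigured")
    return failures


def admit(posture: Posture, policy: Policy = Policy()) -> Decision:
    """Illustrative rule: 0 failures = full, 1 = partial, 2 or more = reject."""
    failures = failed_checks(posture, policy)
    if not failures:
        return Decision.FULL
    return Decision.PARTIAL if len(failures) == 1 else Decision.REJECT
```

Returning the list of failed checks separately is a design choice that makes forced remediation easier: the network can tell the host exactly what to fix before it asks for admission again.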

Monitoring and Detection: Reactive Approach

Preventive measures, the proactive approach, are necessary and help reduce the risk of attacks, but it is practically impossible to prevent all attacks. Similar to a burglar alarm, intrusion detection is also necessary to detect and diagnose malicious activity. Intrusion detection is essentially a combination of monitoring, analysis, and response. Typically an IDS supports a console for human interface and display. Monitoring and analysis are often viewed as passive techniques because they do not interfere with ongoing activities. The typical IDS response is an alert to system administrators, who may or may not choose to investigate further. In other words, traditional IDSs don't offer much response beyond alerts, under the assumption that security incidents require human expertise and judgment for follow-up.

IDS approaches can be categorized in at least two ways. One way is to distinguish between host-based and network-based IDSs depending on where detection is done. While a host-based IDS monitors a single computer, a network-based IDS operates on network packets. Another way to view IDSs is through their analysis approach. Traditionally, the two analysis approaches are misuse (signature-based) detection and anomaly (behavior-based) detection.

Misuse detection and anomaly detection

As shown in the figure, these two views are not mutually exclusive. They are complementary and are often used together.

In practice, intrusion detection faces several challenges: signature-based detection can only recognize events that match a known signature; behavior-based detection relies on an understanding of normal behavior, but "normal" can vary greatly; attackers are clever and evasive, and may attempt to confuse the IDS with fragmented, encrypted, tunneled, or junk packets; an IDS may not respond to an event in real time or quickly enough to stop an attack; and events can occur anywhere at any time, requiring continuous and comprehensive monitoring with correlation across multiple distributed sensors.

1- Host-Based Monitoring

A host-based IDS runs on a computer and monitors system activities for signs of suspicious behavior. Examples include changes to the system Registry, repeated failed login attempts, or the installation of a backdoor. Host-based IDSs typically monitor system objects, processes, and memory regions. For each system object, the IDS typically keeps track of attributes such as permissions, size, modification dates, and hashed contents in order to recognize changes.
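Tracking the attributes of a system object can be sketched with Python's standard library. This is a minimal example that assumes the monitored object is an ordinary file; the function names `snapshot` and `changed_attributes` are hypothetical helpers written for this illustration, not part of any IDS product.

```python
import hashlib
import os
from typing import Dict, List


def snapshot(path: str) -> Dict[str, object]:
    """Record permissions, size, modification time, and a SHA-256 hash of a file."""
    info = os.stat(path)
    with open(path, "rb") as handle:
        digest = hashlib.sha256(handle.read()).hexdigest()
    return {
        "mode": info.st_mode,
        "size": info.st_size,
        "mtime": info.st_mtime,
        "sha256": digest,
    }


def changed_attributes(baseline: Dict[str, object], current: Dict[str, object]) -> List[str]:
    """Return the sorted names of attributes that differ from the baseline."""
    return sorted(key for key in baseline if baseline[key] != current.get(key))
```

In use, a baseline snapshot would be taken while the system is known to be clean and compared against fresh snapshots later. The baseline itself has to be stored somewhere an attacker cannot modify, otherwise the comparison proves nothing.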

One concern for a host-based IDS is possible tampering by an attacker. If an attacker gains control of a system, the IDS on it cannot be trusted. Therefore, tamper protection for the IDS must be designed into the host.

A host-based IDS alone is not a complete solution. While monitoring the host makes sense, it has three significant drawbacks: visibility is limited to a single computer; the IDS process consumes resources, possibly affecting performance on the computer; and attacks will not be seen until they reach the computer. Host-based and network-based IDSs are often used together to combine their strengths.

2- Network-Based/Traffic Monitoring

Network-based IDSs typically monitor network packets for signs of reconnaissance, exploitation, DoS attacks, and malware. They have strengths that complement host-based IDSs: they can see the traffic of a whole population of hosts; they can recognize patterns shared by multiple hosts; and they have the potential to see attacks before they reach the hosts.

IDSs that monitor various network zones.

IDSs are placed in various locations for different views, as shown in the figure. An IDS outside the firewall is useful for gaining information about malicious activity on the Internet. An IDS in the DMZ will see Internet-borne attacks that can pass through the external firewall and reach public servers. Finally, an IDS in the private network is necessary to detect attacks that can successfully bypass perimeter security.

3- Intrusion Prevention Systems (IPS)

IDSs are passive techniques. They usually notify the system administrator to investigate further and take appropriate action. If the system administrator is busy or the incident takes time to investigate, the response may be slow.

A variation called an intrusion prevention system (IPS) attempts to combine the traditional monitoring and analysis functions of an IDS with more active automated responses, such as automatically reconfiguring firewalls to block an attack. An IPS aims to provide a faster response than humans can achieve, but its accuracy depends on the same techniques as traditional IDS. The response must not harm legitimate traffic, so accuracy is critical.
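The difference between alerting and automated response can be sketched as follows. In this simplified model, a source address is blocked once it triggers several detections within a short time window. The class name, the thresholds, and the use of an in-memory set in place of a real firewall rule are all assumptions made for illustration.

```python
from collections import defaultdict, deque
from typing import Deque, Dict, FrozenSet, Set


class SimpleIPS:
    """Toy IPS: block a source after repeated alerts inside a time window."""

    def __init__(self, max_alerts: int = 3, window_seconds: float = 60.0,
                 never_block: FrozenSet[str] = frozenset()) -> None:
        self.max_alerts = max_alerts
        self.window_seconds = window_seconds
        # Addresses that must never be blocked (e.g., critical servers).
        self.never_block = never_block
        # Stand-in for firewall block rules; a real IPS would reconfigure a firewall.
        self.blocked: Set[str] = set()
        self._alerts: Dict[str, Deque[float]] = defaultdict(deque)

    def report_alert(self, source_ip: str, timestamp: float) -> bool:
        """Record one detection; return True if the source is now blocked."""
        if source_ip in self.never_block:
            return False
        if source_ip in self.blocked:
            return True
        recent = self._alerts[source_ip]
        recent.append(timestamp)
        # Discard alerts that have fallen out of the sliding window.
        while recent and timestamp - recent[0] > self.window_seconds:
            recent.popleft()
        if len(recent) >= self.max_alerts:
            self.blocked.add(source_ip)
        return source_ip in self.blocked
```

Requiring several alerts before blocking, and keeping a list of addresses that are never blocked, are two simple ways to limit the harm a false detection can do to legitimate traffic.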

4- Reactive Measures

Once an attack is detected and analyzed, system administrators must respond to it appropriately. One of the principles in security is that the response should be proportionate to the threat. Obviously, the response will depend on the circumstances, but a variety of options are available. In general, it is possible to block, slow down, modify, or redirect malicious traffic. It is not possible to list every possible response. We will explain only two responses here: quarantine and backtracking.

- Quarantine: Dynamic quarantine in computer security is similar to quarantine for infectious diseases. Particularly in the context of malware, preventing an infected computer from infecting other computers is an appropriate response.

- Backtracking: One critical aspect of an attack is the identity or location of the perpetrator. Unfortunately, finding an attacker in IP networks is almost impossible because:

- The source address in IP packets can be easily spoofed.

- By their design, routers do not keep track of transmitted packets.

- Attackers can use a number of intermediary computers (called zombies) to carry out their attacks.

These intermediaries are often innocent computers that have been compromised by an exploit or malware and placed under the control of the attacker. In practice, it may be possible to trace an attack back to the nearest intermediary, but it may be too much to expect to trace it to the actual attacker.

Defense in Depth

We can summarize the layers as follows:

- Network Protection: Firewalls, IPS/IDS, NDR, NAC

- Application Protection: WAF, Application Logs, Updates

- Endpoint Protection: Antivirus/Antimalware, Antiphishing/Mail Security, EPP, EDR, syslog/eventlog, Patching

- Data Protection: DLP, ACL

A SIEM brings all of these layers together.

Conclusion

To protect against network intrusions, we must understand a variety of attacks, from exploits to malware to social engineering. Direct attacks are common, but a class of phishing attacks has emerged that relies on bait to lure victims to a malicious Web site. Phishing attacks are much harder to detect and defend against. Almost anyone can become a victim.

Much can be done to harden computers and reduce the risks they are exposed to, but some attacks are inevitable. Defense in depth, which combines multiple layers of defense, is the most practical strategy. Although each layer is imperfect, together they raise the cost and difficulty for intruders to overcome them.

References

John R. Vacca, Network and System Security, Syngress (2010)

Cyber Defense Strategies Against Zero-Day Attacks

In this article, I will talk about how a cyber defense strategy can be developed against zero-day attacks, which are generally accepted to be difficult to detect and prevent. Of course, we cannot simply stand by empty-handed against the zero-day attacks that many institutions suffer from. What kind of defense infrastructure do we need to establish? Let's discuss it briefly.

What are Zero-Day Attacks?

First, let's understand what exactly zero-day attacks and vulnerabilities are and why they are difficult to detect.